The world's most biodiverse ecosystems are often in its most remote places, where conventional monitoring fails for lack of electricity, internet connectivity, and technical infrastructure. Yet these are precisely the regions where real-time biodiversity data matters most. In 2026, two emerging technologies, optical AI chips and low-power TinyML, offer an approach to conservation monitoring that could work where conventional systems cannot. This article outlines strategies for deploying them in underserved regions.

The 2026 horizon scan of emerging conservation issues identifies two technologies poised to enable real-time biodiversity detection in remote landscapes: low-power Tiny Machine Learning (TinyML) devices that operate without internet connections, and optical AI chips that require minimal energy.[1] Their promise will remain unfulfilled, however, without practical deployment strategies that address the particular challenges of underserved regions.

Key Takeaways

- 🌿 TinyML and optical AI chips enable real-time species identification in remote areas without requiring internet connectivity or grid electricity

- ⚡ Optical neural networks compute with light rather than electrical signals, promising large reductions in power consumption for field deployments

- 🌍 Equitable access challenges remain central to deployment success, requiring strategies that work with limited digital infrastructure in underserved regions

- 📊 Edge computing systems are transitioning biodiversity monitoring from passive data collection to active, real-time ecological alert capabilities

- 🤝 Community-centered deployment models integrate local knowledge with advanced technology to create sustainable monitoring networks

Understanding Optical AI Chips and TinyML Technology for Biodiversity Applications

What Makes Optical AI Different

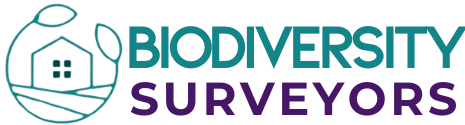

Conventional artificial intelligence systems can consume large amounts of energy, making them impractical for remote biodiversity surveys where power is scarce or nonexistent. Optical AI chips represent a fundamental shift in how computation happens. Instead of moving information as electrical signals through silicon circuits, these devices compute with light: photons traveling through optical waveguides and photonic processors.[2]

The potential energy savings are substantial. Light-based optical chips can improve energy efficiency and processing speed at the same time, and optical neural networks could accelerate processing further.[2] In February 2026, CU Boulder researchers reported microscopic "racetracks" that trap and amplify light with exceptional efficiency, a concrete advance in optical device development.[3]

TinyML: AI That Fits in Your Palm

Tiny Machine Learning (TinyML) refers to machine learning models optimized to run on microcontrollers and small embedded devices, some no larger than a coin. Unlike cloud-based AI, which requires constant internet connectivity, TinyML performs all processing locally on the device. This is transformative for biodiversity monitoring in remote regions where cellular networks and Wi-Fi simply don't exist.

A review of 82 peer-reviewed studies published between 2017 and 2025 documents growing adoption of edge computing for biodiversity monitoring across acoustic, vision-based, tracking, and multimodal systems.[5] These systems can:

- Identify species from images, sounds, or environmental DNA samples

- Count populations automatically without human intervention

- Detect rare events like endangered species appearances

- Operate continuously for months on solar power or batteries

- Function offline in areas with zero connectivity

The Convergence: Why 2026 Matters

The convergence of optical AI and TinyML could open new opportunities for conservation science. Optical chips promise energy-efficient processing, while TinyML frameworks compress sophisticated AI models into formats small enough to run on low-power processors. Together, they could enable real-time biodiversity detection that was impractical only a few years ago.
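One widely used compression step is quantization: storing 32-bit floating-point weights as 8-bit integers, which cuts weight storage fourfold. The sketch below shows affine (asymmetric) per-tensor int8 quantization in plain Python. It is illustrative only: production toolchains such as the TensorFlow Lite converter use more elaborate schemes (for example per-channel scales) and also quantize activations. The function names and weight values are hypothetical.

```python
from array import array

def quantize_int8(weights: list[float]) -> tuple[list[int], float, int]:
    """Affine per-tensor quantization: w ≈ scale * (q - zero_point)."""
    w_min = min(min(weights), 0.0)  # the range must include 0.0 so that
    w_max = max(max(weights), 0.0)  # zero is exactly representable
    scale = (w_max - w_min) / 255.0 or 1.0  # 256 int8 levels span the range
    zero_point = round(-128 - w_min / scale)
    q = [max(-128, min(127, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point

def dequantize(q: list[int], scale: float, zero_point: int) -> list[float]:
    """Map int8 codes back to approximate float weights."""
    return [scale * (v - zero_point) for v in q]

weights = [0.42, -0.13, 0.0, 0.91, -0.77]  # hypothetical layer weights
q, scale, zp = quantize_int8(weights)
restored = dequantize(q, scale, zp)
# Storage per weight: 4 bytes as float32, 1 byte as int8.
print(array("f", weights).itemsize, array("b", q).itemsize)  # prints "4 1"
```

Each restored weight differs from the original by at most about half a quantization step, which is why well-trained models usually lose little accuracy at int8.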

However, horizon scan authors caution that it remains unclear whether efficiency gains will outpace increased AI use sufficiently to mitigate broader environmental impacts.[2] This uncertainty makes thoughtful deployment strategies even more critical for maximizing conservation benefits while minimizing technological footprints.

Deployment Challenges in Underserved Regions

Infrastructure Limitations

Deploying advanced technology in underserved regions presents obstacles that don't arise in well-connected areas. The 2026 horizon scan explicitly identifies making TinyML and optical AI work for users with limited digital infrastructure as central to equitable benefit-sharing.[1]

Common infrastructure challenges include:

| Challenge | Impact | Mitigation |
|---|---|---|
| No grid electricity | Devices cannot operate | Solar panels + ultra-low-power processing (TinyML today, optical AI as it matures) |
| Zero internet connectivity | Cannot upload data or update models | TinyML's offline processing + periodic manual data collection |
| Limited technical expertise | Devices cannot be maintained | Simplified interfaces + community training programs |
| Harsh environmental conditions | Equipment failure | Weatherproof housings + robust optical components |
| Supply chain difficulties | Cannot replace broken parts | Modular designs + local repair networks |

The Digital Divide in Conservation

The promise of optical AI chips and low-power TinyML can only be realized through deliberate efforts to bridge the digital divide. Technologies developed for well-resourced institutions in wealthy nations often fail when transplanted to resource-constrained settings without adaptation.

A UK-based AI project developing computer vision tools to automatically identify and count barnacles, macroalgae, and invertebrates from thousands of images shows how sophisticated current deep learning approaches, including convolutional neural networks and vision transformers, have become.[4] Models of this kind, however, are usually far too large for microcontrollers and would need careful compression and optimization to run on TinyML devices in remote field conditions.

Community Engagement and Local Knowledge

Successful deployment strategies recognize that technology alone never solves conservation challenges. The most effective biodiversity monitoring systems integrate local ecological knowledge with advanced sensing capabilities, creating partnerships where communities become active participants rather than passive subjects of monitoring.

Autonomous eco-acoustic networks in Borneo's tropical rainforests use solar-powered Raspberry Pi units that continuously record audio and transmit files over mobile networks for large-scale soundscape analysis, showing that such systems can be deployed in remote regions.[5] Deployments like this are more likely to be sustained when local communities share in installation, maintenance, and data interpretation.

Practical 2026 Deployment Strategies

Strategy 1: Solar-Powered Optical AI Sensor Networks

The foundation of successful remote deployment combines solar energy harvesting with low-power processing. Photovoltaic panels can still charge batteries under forest canopies, although shading sharply reduces their output, and optical neural networks promise to consume a fraction of the power required by conventional electronic processors.

Implementation steps:

- Site assessment – Evaluate solar exposure, species diversity, and accessibility

- Hardware selection – Choose optical AI chips optimized for target species detection

- Power system design – Size solar panels and battery storage for worst-case weather conditions

- Weatherproofing – Protect sensitive optical components from moisture and temperature extremes

- Installation training – Equip local teams with skills to deploy and maintain systems
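The power system design step usually comes down to a simple energy budget. The sketch below applies a common off-grid rule of thumb: estimate average draw from a duty cycle, size the battery to cover several sunless days without deep discharge, and size the panel from worst-month sun hours. Every number and function name here is a hypothetical placeholder; under a forest canopy the effective sun hours can be far lower than open-sky figures, so field measurements should replace them.

```python
def average_power(active_w: float, sleep_w: float, duty_cycle: float) -> float:
    """Mean draw of a device that is active for `duty_cycle` of the time."""
    return active_w * duty_cycle + sleep_w * (1.0 - duty_cycle)

def size_power_system(avg_power_w: float, days_autonomy: float,
                      worst_peak_sun_hours: float, battery_voltage: float = 12.0,
                      max_depth_of_discharge: float = 0.5,
                      system_derate: float = 0.7) -> dict[str, float]:
    """Rule-of-thumb off-grid sizing for one sensor node."""
    daily_wh = avg_power_w * 24.0
    # Battery must cover `days_autonomy` sunless days without discharging
    # below the allowed depth (deep discharge shortens lead-acid life).
    battery_wh = daily_wh * days_autonomy / max_depth_of_discharge
    # Panel must replace one day's consumption in the worst month's sun,
    # after charging, wiring, heat, and dust losses (the derate factor).
    panel_w = daily_wh / (worst_peak_sun_hours * system_derate)
    return {"daily_wh": daily_wh, "battery_wh": battery_wh,
            "battery_ah": battery_wh / battery_voltage, "panel_w": panel_w}

# Hypothetical node: 2 W while classifying 10% of the time, 0.05 W asleep,
# 5 days of autonomy, 1.5 worst-case peak sun hours under partial canopy.
p = average_power(active_w=2.0, sleep_w=0.05, duty_cycle=0.1)
plan = size_power_system(p, days_autonomy=5, worst_peak_sun_hours=1.5)
# plan ≈ 5.88 Wh/day, 58.8 Wh battery (4.9 Ah at 12 V), 5.6 W panel
```

In practice a margin is usually added on top of the panel figure so the battery can recover after a cloudy spell, not just break even.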

Edge AI systems are moving biodiversity monitoring from passive "collect-upload-analyse" paradigms toward active, real-time alert systems that enable proactive ecological action as events unfold.[5] In practice, sensors can flag possible poaching, habitat destruction, or the presence of endangered species as it happens and, where there is no internet connection, raise alerts through local alarm systems.
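Once a classifier runs on the device, the alerting logic itself can be simple. The sketch below routes each on-device detection: watch-listed labels above a confidence threshold trigger a local alarm, lower-confidence watch-listed hits are queued for human review, and every detection is logged. The labels, the 0.8 threshold, and the `sound_alarm` hook are hypothetical placeholders for whatever classifier and hardware (buzzer, LED, radio) a deployment actually uses.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Detection:
    label: str          # class predicted by the on-device model
    confidence: float   # model score in [0, 1]
    timestamp: float    # seconds since epoch

# Hypothetical watch list: threatened species and threat signatures.
WATCHLIST = frozenset({"sunda_pangolin", "gunshot", "chainsaw"})

def route(det: Detection, watchlist: frozenset = WATCHLIST,
          alert_threshold: float = 0.8) -> str:
    """Decide what to do with one detection, entirely offline."""
    if det.label in watchlist:
        return "alert" if det.confidence >= alert_threshold else "review"
    return "log"

def handle(det: Detection, sound_alarm: Callable[[Detection], None],
           review_queue: list, log: list) -> str:
    action = route(det)
    log.append((action, det))      # every detection is kept locally
    if action == "alert":
        sound_alarm(det)           # hypothetical hardware hook
    elif action == "review":
        review_queue.append(det)   # a ranger or local expert checks later
    return action
```

Keeping uncertain watch-list hits for review rather than alarming on them trades a little latency for far fewer false call-outs.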

Strategy 2: Multimodal Sensing Integration

Emerging edge AI systems are integrating environmental, acoustic, visual, and chemical sensors locally at gateway devices, enabling multimodal models to improve classification confidence and reduce false positives in species identification.[5] This approach is particularly valuable for conducting comprehensive biodiversity impact assessments in complex ecosystems.

Multimodal sensor combinations:

- 📷 Camera traps with optical AI chips for visual species identification

- 🎤 Acoustic monitors using TinyML to recognize bird songs and animal calls

- 🌡️ Environmental sensors tracking temperature, humidity, and air quality

- 💧 eDNA samplers collecting genetic material from water or soil

- 📡 Motion detectors triggering high-resolution recording during activity periods

By processing data from several sensor types together, multimodal systems can achieve higher accuracy than single-modality approaches. A bird detection system might, for example, require a visual detection to be confirmed by a matching acoustic signature, which can substantially reduce the false positives common in single-sensor deployments.
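One simple way to combine modalities is late fusion of each classifier's class probabilities. The sketch below multiplies the per-class probabilities from each sensor (optionally weighted) and renormalizes; with unit weights this is the naive-Bayes combination, which assumes the modalities are conditionally independent and the class prior is uniform. The labels and probabilities are hypothetical. When camera and microphone agree, the fused confidence rises; when a visual trigger is not backed by the audio, it falls.

```python
import math
from typing import Optional

def fuse(posteriors: dict[str, dict[str, float]],
         weights: Optional[dict[str, float]] = None) -> dict[str, float]:
    """Log-linear late fusion of per-modality class probabilities."""
    weights = weights or {}
    # Only classes that every modality can score are fused.
    labels = set.intersection(*(set(p) for p in posteriors.values()))
    log_scores = {
        c: sum(weights.get(m, 1.0) * math.log(max(p[c], 1e-12))
               for m, p in posteriors.items())
        for c in labels
    }
    # Renormalize, shifting by the max log score for numerical stability.
    top = max(log_scores.values())
    unnorm = {c: math.exp(s - top) for c, s in log_scores.items()}
    total = sum(unnorm.values())
    return {c: v / total for c, v in unnorm.items()}

agree = fuse({"camera": {"hornbill": 0.6, "other": 0.4},
              "audio": {"hornbill": 0.7, "other": 0.3}})
# agree["hornbill"] ≈ 0.78, higher than either sensor alone
false_trigger = fuse({"camera": {"hornbill": 0.7, "other": 0.3},
                      "audio": {"hornbill": 0.1, "other": 0.9}})
# false_trigger["hornbill"] ≈ 0.21, below a typical alert threshold
```

The independence assumption rarely holds exactly, so per-modality weights below 1 are a common way to temper overconfident fused scores.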

Strategy 3: Offline-First Data Architecture

Unlike conventional IoT systems that assume constant connectivity, monitoring deployments in underserved regions need offline-first architectures that function independently for extended periods.

Key architectural principles:

- Local processing – All AI inference happens on-device using TinyML models

- Efficient storage – Compressed data formats maximize limited onboard memory

- Periodic synchronization – Manual data collection during scheduled site visits

- Opportunistic connectivity – Automatic upload when cellular signal becomes available

- Data prioritization – Critical detections (rare species, threats) stored preferentially
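The data-prioritization principle can be implemented as a bounded store that evicts the least important records first when memory fills up, and hands back the most important ones first when a site visit or a passing mobile signal allows synchronization. A minimal sketch using Python's `heapq`; the class name, the integer priority scale, and the record fields are hypothetical.

```python
import heapq
import itertools
from typing import Any

class PriorityStore:
    """Bounded on-device store that keeps the most important records."""

    def __init__(self, capacity: int) -> None:
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        # Min-heap of (priority, insertion_order, record): the root is the
        # least important, oldest record and is the first to be evicted.
        self._heap: list[tuple[int, int, Any]] = []
        self._order = itertools.count()

    def add(self, record: Any, priority: int) -> bool:
        """Store a record; return False if it was dropped for lack of space."""
        entry = (priority, next(self._order), record)
        if len(self._heap) < self.capacity:
            heapq.heappush(self._heap, entry)
            return True
        if priority > self._heap[0][0]:
            heapq.heapreplace(self._heap, entry)  # evict least important record
            return True
        return False  # store is full of records at least as important

    def drain(self) -> list[Any]:
        """Return all records, most important first, and empty the store."""
        ordered = sorted(self._heap, key=lambda e: (-e[0], e[1]))
        self._heap.clear()
        return [record for _, _, record in ordered]

store = PriorityStore(capacity=2)
store.add({"label": "common_bird"}, priority=1)
store.add({"label": "rare_frog"}, priority=10)
store.add({"label": "insect"}, priority=1)     # dropped: store is full
store.add({"label": "chainsaw"}, priority=5)   # evicts common_bird
print([r["label"] for r in store.drain()])     # ['rare_frog', 'chainsaw']
```

Returning records highest-priority first matters when an upload window is short: the rare-species and threat detections get through even if the connection drops partway.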

While animal tracking data flows through platforms like Movebank, comparable standardized pipelines for acoustic or camera-trap AI inferences remain rare (BirdWeather is a notable exception), a current infrastructure gap.[5] Deployment strategies must account for this by building robust local storage and eventual synchronization capabilities.

Strategy 4: Community-Centered Training Programs

Technology deployment succeeds or fails based on local capacity building. The most sophisticated optical AI systems become useless if communities lack the knowledge to operate, maintain, and interpret them.

Effective training program components:

- Hands-on workshops – Practical experience installing and configuring devices

- Troubleshooting skills – Diagnosing common problems and performing repairs

- Data interpretation – Understanding AI outputs and ecological significance

- Maintenance protocols – Regular cleaning, battery checks, and component replacement

- Knowledge exchange – Integrating traditional ecological knowledge with AI insights

These programs should recognize existing expertise within communities. Local guides, rangers, and indigenous knowledge holders often possess sophisticated understanding of species behavior, habitat patterns, and seasonal variations that enhance AI system effectiveness when properly integrated.

Strategy 5: Adaptive Model Optimization

Researchers are employing contrastive learning, anomaly detection, and synthetic data augmentation to address challenges such as similar species, subtle life-stage differences, and irregular specimens in real-world biodiversity monitoring scenarios.[4] These advanced techniques enable TinyML models to function effectively despite the limited training data often available for rare or understudied species.

Optimization approaches for field deployment:

- Transfer learning – Starting with models trained on abundant species, then fine-tuning for local species

- Few-shot learning – Training effective classifiers from minimal example images

- Continual learning – Models that improve over time as they encounter new examples

- Federated learning – Combining insights from multiple deployment sites without centralizing data

- Human-in-the-loop – Local experts verify uncertain classifications, improving model accuracy
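Federated learning is often implemented with federated averaging (FedAvg): each site trains locally and shares only model weights, which a coordinator averages in proportion to how many local examples each site used. The sketch below shows only that aggregation step on plain lists of floats; local training, secure transport, and the site data are omitted or hypothetical.

```python
def federated_average(site_updates: list[tuple[list[float], int]]) -> list[float]:
    """Weighted average of per-site model weights (FedAvg aggregation step).

    site_updates: (flattened weights, number of local training examples) per site.
    """
    if not site_updates:
        raise ValueError("no site updates")
    dim = len(site_updates[0][0])
    if any(len(w) != dim for w, _ in site_updates):
        raise ValueError("all sites must share the same model architecture")
    total = sum(n for _, n in site_updates)
    if total <= 0:
        raise ValueError("sites reported no training examples")
    global_weights = [0.0] * dim
    for weights, n in site_updates:
        for i, w in enumerate(weights):
            global_weights[i] += w * n / total  # sites with more data count more
    return global_weights

# Hypothetical example: two field sites with different amounts of labelled data.
new_global = federated_average([([1.0, 2.0], 100), ([3.0, 4.0], 300)])
# new_global == [2.5, 3.5]
```

Only the weight vectors leave each site, so raw recordings and images (and any sensitive locations they reveal) can stay under local control.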

This adaptive approach aligns with broader biodiversity net gain strategies that emphasize continuous improvement and evidence-based conservation management.

Strategy 6: Modular and Repairable Design

Supply chain challenges in remote regions mean that specialized components may take months to replace if they fail. Successful deployment strategies prioritize modularity and repairability over cutting-edge performance.

Design principles:

- ✅ Standardized components – Use widely available parts when possible

- ✅ Hot-swappable modules – Replace failed components in the field without powering down or using specialized tools

- ✅ Redundant systems – Critical functions have backup capabilities

- ✅ Local repair networks – Train technicians in regional hubs

- ✅ Open documentation – Detailed repair manuals in local languages

Strategy 7: Phased Deployment and Iteration

Rather than attempting large-scale deployment immediately, successful strategies begin with pilot projects that allow for learning and adaptation. This approach reduces risk while building local capacity and demonstrating value to stakeholders.

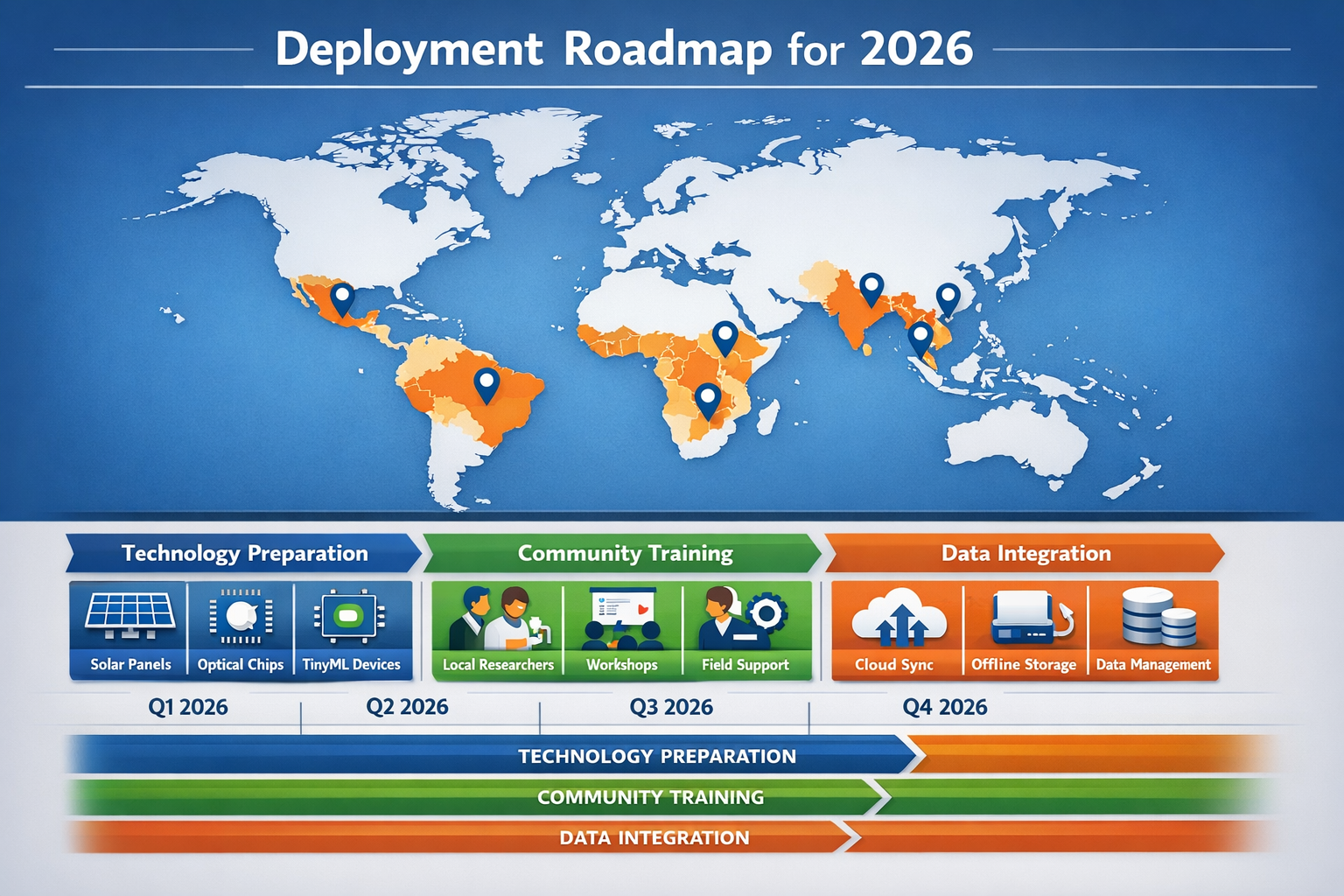

Recommended phased approach:

Phase 1 (Months 1-3): Pilot deployment at 2-3 accessible sites with strong community partnerships

Phase 2 (Months 4-6): Evaluate performance, gather feedback, refine hardware and training approaches

Phase 3 (Months 7-12): Expand to 10-15 sites with trained local teams leading installation

Phase 4 (Year 2+): Scale to full network with community ownership and sustainable funding

This iterative approach mirrors best practices in achieving biodiversity net gain through adaptive management and stakeholder engagement.

Real-World Applications and Case Studies

Tropical Rainforest Acoustic Monitoring

The Borneo deployment of solar-powered acoustic sensors shows what such systems look like in practice. The units continuously record soundscapes and transmit audio files over mobile networks for large-scale analysis.[5] Adding on-device TinyML classifiers would let similar units recognize specific species calls in real time while still storing raw audio for later analysis.

Where there is no mobile signal, an offline-first variant would instead buffer recordings locally, so units could run for long periods with only periodic visits to retrieve accumulated data. Involving local communities in installation and training them in acoustic ecology can also create employment while advancing conservation science.
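How long such a unit can run between visits depends largely on storage. A back-of-the-envelope sketch, assuming uncompressed PCM audio and hypothetical recording settings (the function name is also hypothetical):

```python
def days_until_full(card_bytes: float, sample_rate_hz: int, bit_depth: int,
                    channels: int, duty_cycle: float) -> float:
    """Days of uncompressed PCM recording that fit on a storage card."""
    bytes_per_second = sample_rate_hz * (bit_depth // 8) * channels
    bytes_per_day = bytes_per_second * 86_400 * duty_cycle
    return card_bytes / bytes_per_day

# Hypothetical settings: 128 GB card, 48 kHz, 16-bit mono, 1 minute in every 5.
days = days_until_full(128e9, 48_000, 16, 1, duty_cycle=0.2)
print(f"{days:.0f} days")  # prints "77 days"
```

Lossless compression such as FLAC, a lower sample rate, or keeping raw audio only around on-device detections would all stretch the interval between visits.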

Marine Biodiversity Computer Vision

The UK MarClim project's effort to develop AI-driven computer vision for automated marine biodiversity monitoring illustrates what automated image analysis could offer in coastal environments. The project deals with some 3,000 images collected annually across 20 survey sites and aims to identify and count barnacles, macroalgae, and invertebrates automatically.[4]

Adapting this approach for deployment in underserved coastal regions requires optimizing the deep learning models to run on TinyML hardware, enabling local communities to monitor their marine resources without expensive laboratory infrastructure. This democratization of monitoring capability supports community-based conservation efforts worldwide.

Endangered Species Early Warning Systems

Edge AI systems enable proactive conservation by detecting endangered species or threats in real time. A camera trap running an on-device classifier (on TinyML hardware today, and potentially on optical AI chips as they mature) could identify a critically endangered species within seconds of capture and trigger a local visual or audible alert without any internet connection.

This immediate feedback allows rangers to respond to poaching threats, document rare species behaviors, or protect nesting sites before disturbance occurs. The shift from passive data collection to active ecological intervention represents a fundamental advancement in conservation technology.

Addressing Equity and Access Concerns

Technology Transfer and Capacity Building

Ensuring equitable access to optical AI chips and low-power TinyML requires deliberate technology transfer programs that build lasting local capacity rather than dependency on external experts.

Equity-centered approaches include:

- 🤝 Co-development partnerships – Involving local communities in technology design from the beginning

- 📚 Open-source frameworks – Using freely available TinyML platforms like TensorFlow Lite Micro

- 💰 Sustainable funding models – Connecting monitoring to biodiversity credit markets that generate revenue

- 🎓 Educational pathways – Creating training opportunities leading to employment in conservation technology

- 🌐 South-South knowledge exchange – Facilitating learning between underserved regions facing similar challenges

Data Sovereignty and Ownership

As biodiversity monitoring generates valuable data about species distributions, ecosystem health, and natural resources, questions of data ownership and control become critical. Deployment strategies must respect indigenous rights and ensure communities maintain sovereignty over information collected from their territories.

Best practices for data governance:

- Communities control data access and sharing decisions

- Traditional knowledge is protected from exploitation

- Benefits from data use (scientific publications, conservation funding) flow back to communities

- Data storage and processing respect local laws and cultural protocols

- Transparent agreements established before deployment begins
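Some of these principles can be enforced in software at the point of export. The sketch below, with hypothetical field, species, and recipient names, releases a record only to parties the community has approved for it, and coarsens coordinates for species the community flags as sensitive (rounding to one decimal place of a degree, roughly 11 km of latitude). It is one possible technical safeguard, not a substitute for the agreements themselves.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Observation:
    species: str
    lat: float
    lon: float
    # Parties the community has approved to receive this record (hypothetical field).
    approved_recipients: frozenset[str]

def export_for(recipient: str, records: list[Observation],
               sensitive_species: set[str], decimals: int = 1) -> list[Observation]:
    """Release only community-approved records, coarsening sensitive locations."""
    released = []
    for rec in records:
        if recipient not in rec.approved_recipients:
            continue  # no consent for this party: the record stays local
        if rec.species in sensitive_species:
            # Round coordinates so exact sites of sensitive species are not exposed.
            rec = replace(rec, lat=round(rec.lat, decimals),
                          lon=round(rec.lon, decimals))
        released.append(rec)
    return released

records = [
    Observation("sunda_pangolin", 1.23456, 116.98765,
                frozenset({"village_council", "university"})),
    Observation("hornbill", 1.5, 117.0, frozenset({"village_council"})),
]
shared = export_for("university", records, sensitive_species={"sunda_pangolin"})
```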

Avoiding Technological Colonialism

The history of conservation is marked by extractive relationships in which wealthy institutions collected data from biodiverse regions without meaningful local benefit. Deployments of optical AI chips and low-power TinyML must actively counter this pattern through genuine partnership models.

This means prioritizing community needs and questions over external research agendas, investing in local technical capacity rather than flying in experts, and ensuring that monitoring systems serve local conservation priorities first. When communities own and control the technology, biodiversity monitoring becomes a tool for self-determination rather than external surveillance.

Future Directions and Emerging Opportunities

Integration with Biodiversity Markets

As biodiversity credit markets mature, the real-time monitoring capabilities enabled by optical AI and TinyML create opportunities for verifiable, continuous assessment of conservation outcomes. Projects that buy or sell biodiversity units increasingly demand robust monitoring data to verify claims and track progress toward biodiversity net gain targets.

Optical AI sensors deployed at habitat restoration sites could automatically document species colonization, vegetation recovery, and improvements in ecosystem function, providing the evidence base that credible biodiversity credits require. Linking monitoring technology to conservation finance in this way could create sustainable funding streams for underserved regions.

Standardization and Interoperability

The current lack of standardized data pipelines for AI-based biodiversity monitoring represents both a challenge and an opportunity. As deployment scales in 2026, establishing common data formats, model architectures, and quality standards will enable global integration while preserving local flexibility.

Emerging standards should prioritize:

- Model portability – TinyML models that work across different hardware platforms

- Data compatibility – Consistent formats enabling cross-project analysis

- Quality metrics – Standardized accuracy and reliability reporting

- Ethical guidelines – Shared principles for equitable deployment

- Open protocols – Preventing vendor lock-in and supporting innovation
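As an illustration of data compatibility, a detection could be exported as a small, self-describing record. The sketch below borrows a few Darwin Core term names (`scientificName`, `eventDate`, `decimalLatitude`, `decimalLongitude`, and `basisOfRecord` with the value `MachineObservation`) and adds model-specific fields (`confidence`, `modelVersion`, `deviceID`) that are hypothetical and not part of that standard. The camelCase field names deliberately mirror the Darwin Core terms; the example values are invented.

```python
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class DetectionRecord:
    scientificName: str      # Darwin Core term
    eventDate: str           # Darwin Core term, ISO 8601 timestamp
    decimalLatitude: float   # Darwin Core term
    decimalLongitude: float  # Darwin Core term
    confidence: float        # hypothetical: model score in [0, 1]
    modelVersion: str        # hypothetical: which model produced the detection
    deviceID: str            # hypothetical: which field unit recorded it
    basisOfRecord: str = "MachineObservation"  # Darwin Core term and value

def to_json(record: DetectionRecord) -> str:
    """Serialize with sorted keys so output is stable across devices."""
    return json.dumps(asdict(record), sort_keys=True)

def from_json(text: str) -> DetectionRecord:
    return DetectionRecord(**json.loads(text))

rec = DetectionRecord("Buceros rhinoceros", "2026-03-01T05:42:10Z",
                      4.95, 117.8, 0.91, "bird-v3-int8", "unit-07")
line = to_json(rec)  # one JSON object per detection, easy to append to a log
```

Recording the model version alongside each detection is what later makes standardized accuracy reporting possible: results can be re-scored when a model is replaced.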

Advanced Multimodal Integration

Future systems will increasingly combine optical AI processing with multiple sensor modalities, creating comprehensive ecosystem monitoring platforms. A single solar-powered gateway device might integrate:

- Visual species identification through camera traps

- Acoustic monitoring of birds, bats, and insects

- Environmental sensors tracking microclimate

- eDNA collection and preliminary analysis

- Chemical sensors detecting pollutants or fires

By processing these data streams locally, for instance on optical neural network hardware, such a gateway could build detailed ecological models without cloud connectivity: a true edge-intelligence approach to conservation science.

Conclusion

Optical AI chips and low-power TinyML represent more than a technological advance: deployed well, they support a fundamental shift toward equitable, community-centered conservation monitoring. Combining energy-efficient optical computing with offline-capable machine learning opens new ways to understand and protect biodiversity in the world's most remote and underserved ecosystems.

However, technology alone never solves conservation challenges. Success requires deployment strategies that prioritize local capacity building, respect community sovereignty over data and resources, and integrate traditional ecological knowledge with advanced sensing capabilities. The seven strategies outlined—solar-powered sensor networks, multimodal integration, offline-first architecture, community training, adaptive optimization, modular design, and phased deployment—provide a practical framework for realizing this vision in 2026.

Actionable Next Steps

For conservation organizations:

- Pilot optical AI and TinyML systems at 2-3 sites with strong community partnerships

- Invest in local technical training programs that create lasting capacity

- Establish data governance frameworks that respect community sovereignty

- Connect monitoring systems to biodiversity net gain frameworks for sustainable funding

For technology developers:

- Design optical AI chips specifically optimized for conservation applications

- Create open-source TinyML frameworks accessible to underserved regions

- Prioritize modularity, repairability, and extreme energy efficiency

- Engage communities in co-development from initial design stages

For policymakers and funders:

- Support technology transfer programs that build local ownership

- Fund research on equitable deployment models and impact assessment

- Establish standards that prevent technological colonialism

- Create incentives linking verified monitoring to conservation finance

For local communities and practitioners:

- Engage with pilot projects to shape technology to local needs

- Document traditional ecological knowledge that enhances AI systems

- Build regional networks for shared learning and technical support

- Advocate for data sovereignty and equitable benefit-sharing

The biodiversity crisis demands urgent action, but that urgency cannot justify extractive or inequitable approaches. By deploying optical AI chips and low-power TinyML through strategies centered on community empowerment, data sovereignty, and genuine partnership, 2026 can mark a turning point toward truly equitable conservation technology that serves both nature and the communities who protect it.

References

[1] UNEP-WCMC, "What's Next for Biodiversity Conservation: Insights from the 2026 Horizon Scan" – https://www.unep-wcmc.org/en/news/whats-next-for-biodiversity-conservation-insights-from-the-2026-horizon-scan

[2] The Invading Sea, "Conservation horizon scan: AI, drought, climate change, tropical forests, seaweed, Southern Ocean" (2 January 2026) – https://www.theinvadingsea.com/2026/01/02/conservation-horizon-scan-ai-drought-climate-change-tropical-forests-seaweed-southern-ocean/

[3] ScienceDaily, news release (24 February 2026) – https://www.sciencedaily.com/releases/2026/02/260224015540.htm

[4] CENTA, "2026-LU06: AI-Driven Computer Vision for Automated Monitoring of Marine Biodiversity and Climate Impacts" (PhD studentship) – https://centa.ac.uk/studentship/2026-lu06-ai-driven-computer-vision-for-automated-monitoring-of-marine-biodiversity-and-climate-impacts/

[5] arXiv preprint 2602.13496v1 – https://arxiv.org/html/2602.13496v1