In the heart of the Amazon rainforest, a device smaller than a deck of cards silently identifies bird species, detects poacher movements, and records biodiversity data, all without a single bar of cellular service. This is the promise of TinyML for offline wildlife monitoring, an approach that is democratizing conservation technology across the globe. 🌍

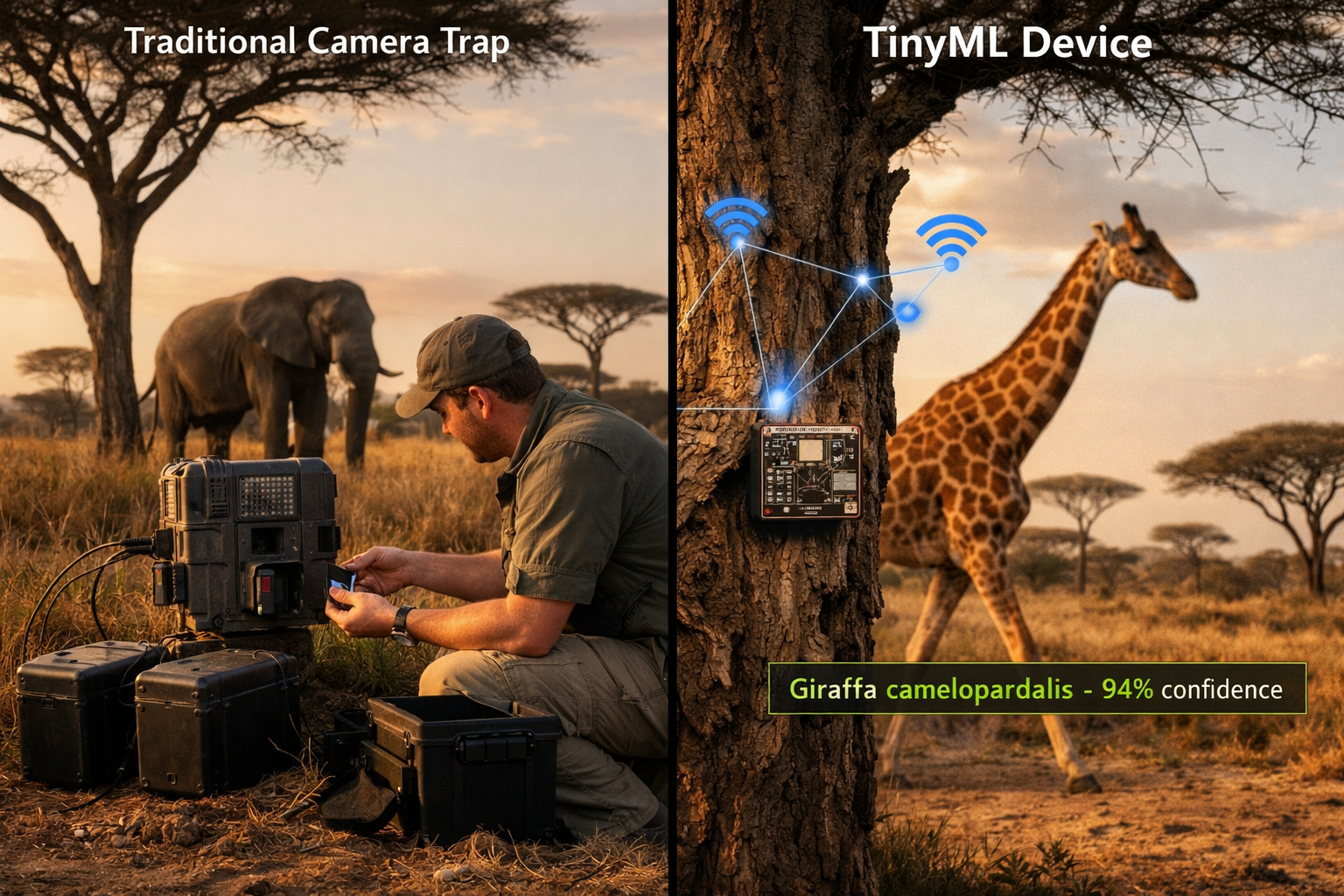

As biodiversity loss accelerates worldwide, ecologists face a critical challenge: how to monitor wildlife in remote locations where internet connectivity is impossible and traditional equipment is prohibitively expensive. TinyML (Tiny Machine Learning) offers a groundbreaking solution by embedding artificial intelligence directly into miniature, low-power devices that operate completely offline. These pocket-sized sensors can identify species, detect threats, and collect ecological data for months without human intervention or internet access.

In 2026, standardized protocols for deploying these devices have transformed how conservation teams conduct biodiversity surveys, making sophisticated monitoring accessible to organizations with limited budgets and infrastructure. This article explores the deployment guides, technical specifications, and equity considerations that are reshaping wildlife monitoring for the grid-independent world.

Key Takeaways

- TinyML devices enable offline AI processing on microcontrollers whose power draw is measured in milliwatts rather than watts, making months-long deployments possible in remote locations without internet or frequent battery changes

- 2026 protocols standardize deployment practices including data storage optimization, species classification models, and anti-poaching detection systems specifically designed for grid-independent areas

- Cost barriers have dropped dramatically, with complete TinyML monitoring systems now available for under $50, compared to traditional camera traps costing $300-600

- Equity gaps are being addressed through open-source hardware designs, regional training programs, and multilingual model repositories that serve conservation teams in developing nations

- Storage management techniques including edge compression and selective data retention allow devices to operate for 6-12 months on standard SD cards without data loss

Understanding TinyML Technology for Wildlife Conservation

What Makes TinyML Different from Traditional AI

Traditional artificial intelligence requires powerful computers, constant internet connectivity, and significant electrical power. TinyML fundamentally changes this equation by running machine learning models on microcontrollers that cost less than $10 and consume minimal energy—often measured in milliwatts rather than watts.

These miniature systems can:

- Process data locally without sending information to cloud servers

- Operate for months on small solar panels or AA batteries

- Function in extreme conditions including rainforests, deserts, and arctic environments

- Make real-time decisions about what data to store and what to discard

The breakthrough enabling offline TinyML monitoring is model compression: techniques that shrink neural networks to fit within the memory constraints of microcontrollers (typically 256 KB to 2 MB). These compressed models are reported to retain 85-95% of the accuracy of their full-sized counterparts while requiring far less memory and computation.

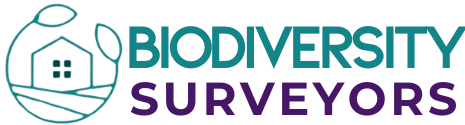

Core Components of a TinyML Wildlife Monitoring System

A complete offline monitoring system consists of several integrated components:

| Component | Function | Typical Specifications |
|---|---|---|
| Microcontroller | Runs AI models and manages system | ESP32, Arduino Nano 33, STM32 |
| Sensor Array | Captures environmental data | Camera, microphone, PIR motion sensor |
| Neural Accelerator (optional) | Accelerates neural network inference | Google Coral Edge TPU, Kendryte K210 |
| Storage Module | Holds collected data and models | 32-256 GB SD card |
| Power System | Provides sustained energy | Solar panel + LiPo battery |
| Weatherproof Housing | Protects electronics from elements | IP67-rated enclosure |

The 2026 protocols emphasize modular design, allowing conservation teams to customize systems based on their specific monitoring needs. A bird diversity survey might prioritize audio sensors and species classification models, while anti-poaching applications focus on human detection and alert systems.

Deployment Protocols for Grid-Independent Biodiversity Surveys

Site Selection and Installation Guidelines

Successful TinyML deployment begins with strategic site selection. The 2026 protocols recommend a systematic approach:

Phase 1: Habitat Assessment 🌳

- Identify high-biodiversity corridors and wildlife movement paths

- Map areas with historical poaching activity or conservation concern

- Evaluate solar exposure for power system planning

- Document accessibility for periodic maintenance visits

Phase 2: Device Configuration

- Select appropriate AI models for target species (pre-trained or custom)

- Configure trigger sensitivity to balance detection accuracy with battery life

- Set data retention policies based on storage capacity

- Program backup protocols for critical detections

Phase 3: Physical Installation

- Mount devices 1.5-2.5 meters above ground for optimal detection

- Angle cameras 15-20 degrees downward to capture ground-level activity

- Ensure solar panels face equator-ward with minimal canopy obstruction

- Camouflage installations to prevent tampering or wildlife disturbance

For teams conducting comprehensive biodiversity assessments, the protocols recommend deploying devices in grid patterns with 200-500 meter spacing, depending on terrain and target species. This density provides adequate coverage while remaining cost-effective for large survey areas.
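
To get a feel for what this spacing means in practice, the sketch below lays out a square grid over a rectangular survey area and counts the devices needed. The function names, the 2 km x 2 km example area, and the $50 unit cost (the upper bound quoted in this article) are illustrative assumptions, not values taken from a specific protocol document.

```python
import math


def grid_positions(width_m, height_m, spacing_m):
    """Return (x, y) offsets in metres for a square grid over the survey area."""
    if spacing_m <= 0:
        raise ValueError("spacing_m must be positive")
    cols = math.floor(width_m / spacing_m) + 1
    rows = math.floor(height_m / spacing_m) + 1
    return [(c * spacing_m, r * spacing_m) for r in range(rows) for c in range(cols)]


def deployment_cost(n_devices, unit_cost_usd):
    """Hardware cost only; maintenance visits are not included."""
    return n_devices * unit_cost_usd


if __name__ == "__main__":
    # Assumed example: a 2 km x 2 km block at both ends of the spacing range.
    for spacing in (200, 500):
        n = len(grid_positions(2000, 2000, spacing))
        print(f"{spacing} m spacing: {n} devices, ${deployment_cost(n, 50)} hardware")
```

Moving from 500 m to 200 m spacing raises the device count from 25 to 121 for this example area, which is why spacing is usually the first parameter to settle when budgeting a survey.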

Data Storage Optimization Techniques

Storage limitations represent one of the most significant challenges for offline monitoring systems. A single high-resolution image can consume 2-5 MB, meaning an unoptimized system might fill a 32GB card in just weeks. The 2026 protocols introduce sophisticated storage management strategies that extend deployment periods to 6-12 months:

Intelligent Capture Filtering

- Use TinyML models to classify detections before saving images

- Store only "high confidence" species identifications (typically >80% certainty)

- Discard false triggers from vegetation movement or weather events

- Implement hierarchical storage: full images for rare species, thumbnails for common ones
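
The filtering rules above can be sketched as a small decision function. This is a minimal illustration, assuming a classifier that returns a label and a confidence score; the `Detection` type, the `"background"` label for false triggers, and the rare-species list are hypothetical names introduced for the example.

```python
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.80               # ">80% certainty" from the list above
RARE_SPECIES = {"jaguar", "harpy_eagle"}  # hypothetical priority list


@dataclass
class Detection:
    label: str         # predicted class; "background" marks a false trigger
    confidence: float  # classifier score in the range 0.0-1.0


def storage_action(det: Detection) -> str:
    """Return 'discard', 'thumbnail', or 'full' for one detection."""
    if det.label == "background" or det.confidence <= CONFIDENCE_THRESHOLD:
        return "discard"
    if det.label in RARE_SPECIES:
        return "full"        # full-resolution image for rare species
    return "thumbnail"       # reduced image for common species


if __name__ == "__main__":
    for det in (Detection("jaguar", 0.93), Detection("agouti", 0.91),
                Detection("background", 0.99), Detection("agouti", 0.55)):
        print(det.label, det.confidence, "->", storage_action(det))
```

On a real device the same logic would typically run in C or C++ next to the inference call; Python is used here only to keep the decision rule readable.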

Edge Compression Algorithms

- Apply JPEG compression with adaptive quality settings (40-60% for archival)

- Utilize H.265 video encoding for motion sequences where the hardware supports it

- Implement lossless compression for metadata and classification logs

- Reserve 10-15% storage buffer for unexpected high-activity periods

Selective Data Retention

- Prioritize endangered or target species with full-resolution capture

- Sample common species at reduced frequency (e.g., every 10th detection)

- Automatically delete oldest low-priority data when storage reaches 85% capacity

- Maintain separate protected partition for critical poaching alerts

These techniques typically achieve 3-5x storage efficiency improvements compared to traditional camera traps, making extended deployments feasible even with modest storage capacity.
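
As a rough sketch of the selective retention rules, the class below keeps every tenth detection of a common species and evicts the oldest low-priority files once usage reaches 85% of capacity, while never touching protected records. The class and method names are assumptions made for this example, and sizes are tracked in abstract units rather than real file-system calls.

```python
from collections import deque


class RetentionManager:
    """Tracks stored items and evicts the oldest low-priority ones first."""

    def __init__(self, capacity, high_water=0.85, sample_every=10):
        self.capacity = capacity
        self.high_water = high_water      # eviction starts at 85% usage
        self.sample_every = sample_every  # keep every Nth common detection
        self.used = 0
        self.low_priority = deque()       # (name, size), oldest on the left
        self.protected = []               # e.g. poaching alerts, never evicted
        self._common_seen = 0

    def should_keep_common(self):
        """Return True for every Nth detection of a common species."""
        self._common_seen += 1
        return self._common_seen % self.sample_every == 0

    def store(self, name, size, protected=False):
        target = self.protected if protected else self.low_priority
        target.append((name, size))
        self.used += size
        self._evict()

    def _evict(self):
        # Drop the oldest low-priority items until usage falls below the mark.
        while self.used >= self.high_water * self.capacity and self.low_priority:
            _, size = self.low_priority.popleft()
            self.used -= size


if __name__ == "__main__":
    manager = RetentionManager(capacity=100)
    for name in ("a", "b", "c"):
        manager.store(name, 30)
    manager.store("alert", 40, protected=True)
    print(list(manager.low_priority), manager.protected, manager.used)
```

Keeping protected records in a separate list mirrors the idea of a protected partition: if only protected data remains, eviction simply stops rather than deleting alerts.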

Poacher Detection Systems in Remote Areas

Real-Time Threat Identification Without Connectivity

One of the most impactful applications of offline TinyML monitoring is anti-poaching surveillance. Traditional systems rely on cellular networks to alert rangers, making them ineffective in remote areas. TinyML devices address this through on-device detection, autonomous decision-making, and local mesh networks.

Human Detection Models

Modern TinyML systems employ specialized neural networks trained to distinguish humans from wildlife with 92-97% accuracy. These models analyze:

- Bipedal movement patterns distinct from quadrupedal animals

- Human body proportions and posture

- Equipment signatures (weapons, vehicles, tools)

- Behavioral patterns (stalking, setting traps, carrying carcasses)

When human presence is detected in protected zones, devices can:

- Trigger high-priority recording with increased frame rates and resolution

- Activate local alarm systems including sirens or strobe lights to deter intrusion

- Relay alerts through mesh networks to nearby devices and ranger stations

- Timestamp and GPS-tag incidents for investigation and prosecution evidence
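
Because low-power radio links carry only small payloads, a timestamped, GPS-tagged alert is usually packed into a few bytes rather than sent as text. The sketch below shows one way to do that with Python's `struct` module. The 14-byte layout, the field order, and the `ALERT_HUMAN` code are assumptions for illustration, not a format defined by any protocol.

```python
import struct

# Hypothetical wire format, little-endian, 14 bytes in total:
#   B  alert type
#   B  confidence as a whole percentage (0-100)
#   I  Unix time in seconds (UTC)
#   i  latitude  in units of 1e-7 degrees
#   i  longitude in units of 1e-7 degrees
ALERT_FORMAT = "<BBIii"
ALERT_HUMAN = 1


def pack_alert(alert_type, confidence, unix_time, lat_deg, lon_deg):
    """Encode one alert as a fixed-size byte string."""
    return struct.pack(
        ALERT_FORMAT,
        alert_type,
        round(confidence * 100),
        unix_time,
        round(lat_deg * 1e7),
        round(lon_deg * 1e7),
    )


def unpack_alert(payload):
    """Decode a payload produced by pack_alert."""
    alert_type, conf, unix_time, lat, lon = struct.unpack(ALERT_FORMAT, payload)
    return {
        "type": alert_type,
        "confidence": conf / 100,
        "unix_time": unix_time,
        "lat": lat / 1e7,
        "lon": lon / 1e7,
    }


if __name__ == "__main__":
    payload = pack_alert(ALERT_HUMAN, 0.95, 1770000000, -3.4653, -62.2159)
    print(len(payload), unpack_alert(payload))
```

Storing coordinates as scaled integers avoids floating-point fields on the wire and keeps roughly centimetre-level resolution, far finer than the GPS fix itself.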

Mesh Network Architecture for Alert Propagation

Since internet connectivity is unavailable, the 2026 protocols utilize LoRa (Long Range) radio technology to create device-to-device communication networks. This allows threat alerts to "hop" between devices until reaching a central collection point with satellite uplink or periodic ranger access.

Network Configuration:

- Each device acts as both sensor and relay node

- Transmission range: 2-10 km depending on terrain and vegetation

- Power consumption: <100mW during transmission bursts

- Message priority system ensures critical alerts reach rangers first

- Encrypted communications prevent poacher interception

This distributed architecture means a poacher detected 15 kilometers from the nearest ranger station can trigger an alert that propagates through intermediate devices, reaching response teams within minutes rather than days or weeks.
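
A simple way to check a planned layout before deployment is to confirm that an alert can actually reach the ranger station through devices that are within radio range of each other. The sketch below does this with a breadth-first search over device positions. It is a planning aid under simplifying assumptions (flat terrain, a single fixed range for every link, hypothetical node names) and does not model how a real mesh firmware routes packets.

```python
import math
from collections import deque


def relay_path(nodes, source, sink, range_km):
    """Return the fewest-hop route from source to sink, or None if unreachable.

    nodes maps a node name to its (x_km, y_km) position.
    """
    previous = {source: None}
    queue = deque([source])
    while queue:
        current = queue.popleft()
        if current == sink:
            path = []
            while current is not None:
                path.append(current)
                current = previous[current]
            return path[::-1]
        for other in nodes:
            reachable = math.dist(nodes[current], nodes[other]) <= range_km
            if other not in previous and reachable:
                previous[other] = current
                queue.append(other)
    return None


if __name__ == "__main__":
    # Assumed layout: devices every 5 km along a corridor, station at 15 km.
    layout = {
        "cam_a": (0.0, 0.0),
        "cam_b": (5.0, 0.0),
        "cam_c": (10.0, 0.0),
        "ranger_station": (15.0, 0.0),
    }
    print(relay_path(layout, "cam_a", "ranger_station", range_km=5.0))
    print(relay_path(layout, "cam_a", "ranger_station", range_km=4.0))
```

With a 5 km link range the alert crosses three hops; at 4 km the same layout has no route at all, which is the kind of gap worth finding before devices are mounted.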

Organizations working on achieving biodiversity conservation goals have reported 40-60% reductions in poaching incidents in areas with deployed TinyML mesh networks, demonstrating the technology's real-world impact.

Addressing Equity Gaps in Global Conservation Technology

Cost Barriers and Open-Source Solutions

Historically, advanced monitoring technology has been accessible only to well-funded conservation organizations in developed nations. A traditional camera trap system costs $300-600 per unit, placing comprehensive monitoring beyond reach for many grassroots conservation groups in biodiversity hotspots.

TinyML dramatically reduces these barriers:

| System Type | Cost Per Unit | Hardware Cost (50 devices) |
|---|---|---|
| Traditional Camera Trap | $300-600 | $15,000-30,000 |
| Commercial TinyML System | $80-150 | $4,000-7,500 |
| Open-Source TinyML Kit | $30-50 | $1,500-2,500 |

The 2026 protocols emphasize open-source hardware and software to further reduce costs and enable local manufacturing:

- Hardware designs published under Creative Commons licenses

- Pre-trained AI models available through public repositories

- Assembly instructions with locally-sourceable components

- Training datasets covering species from underrepresented regions

Organizations like Conservation X Labs and Arribada Initiative have created regional manufacturing hubs where conservation teams can build custom TinyML devices using locally available materials, reducing costs by 60-70% compared to importing commercial systems.

Training Programs and Knowledge Transfer

Technology is only valuable if people can effectively deploy and maintain it. The 2026 protocols include comprehensive capacity-building frameworks designed for conservation teams with varying technical backgrounds:

Tiered Training Approach:

Level 1: Field Deployment (2-3 days)

- Basic device installation and maintenance

- Site selection and camera positioning

- Data collection and storage management

- Troubleshooting common issues

Level 2: System Configuration (1 week)

- Model selection and optimization

- Custom trigger programming

- Network setup and alert systems

- Data analysis and species identification

Level 3: Advanced Development (2-4 weeks)

- Training custom AI models for regional species

- Hardware modification and sensor integration

- Firmware development and optimization

- Community training and knowledge sharing

These programs are delivered through regional training centers in biodiversity hotspots including Southeast Asia, Central Africa, and Latin America. Instruction is provided in local languages, and curricula incorporate traditional ecological knowledge alongside technical skills.

Multilingual Model Repositories and Regional Customization

A significant equity challenge has been the predominance of AI models trained on species from North America and Europe. The 2026 protocols address this through geographically distributed model development:

🌏 Regional Model Libraries

- Southeast Asian rainforest species (1,200+ models)

- African savanna and forest ecosystems (900+ models)

- Neotropical biodiversity (1,500+ models)

- Arctic and sub-Arctic species (400+ models)

Each repository includes:

- Species-specific classification models

- Habitat-appropriate detection algorithms

- Locally-validated accuracy metrics

- Training datasets for model refinement

Conservation teams can download pre-trained models for their region, test them in field conditions, and contribute improved versions back to the community. This collaborative approach has accelerated model development while ensuring relevance to local conservation priorities.

For organizations implementing biodiversity monitoring strategies, these accessible tools enable data collection that rivals or exceeds the quality of expensive commercial systems.

Technical Specifications and Best Practices for 2026

Hardware Selection Guidelines

Choosing appropriate components is critical for system reliability and performance. The 2026 protocols provide decision frameworks based on monitoring objectives:

For Audio-Based Monitoring (Birds, Bats, Amphibians):

- Microcontroller: ESP32-S3 with I2S audio support

- Sensor: I2S MEMS microphone (e.g., Knowles SPH0645LM4H), optionally several in an array

- Processing: Edge Impulse audio classification models

- Storage: 64-128GB for continuous recording

- Power: 5W solar panel + 3000mAh battery

For Visual Monitoring (Mammals, Reptiles):

- Microcontroller: Arduino Portenta H7 or Raspberry Pi Pico

- Sensor: OV7675 or OV2640 camera module

- Processing: TensorFlow Lite Micro with MobileNetV2

- Storage: 128-256GB for image sequences

- Power: 10W solar panel + 5000mAh battery

For Multi-Modal Systems (Comprehensive Surveys):

- Processor: NVIDIA Jetson Nano (a single-board computer rather than a microcontroller; it draws watts rather than milliwatts but offers far greater capability)

- Sensors: Camera + microphone + PIR + environmental sensors

- Processing: Multiple parallel AI models

- Storage: 256-512GB with tiered retention

- Power: 20W solar panel + 10,000mAh battery
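
Battery sizing is easier to reason about with a quick power budget. The sketch below estimates how long a battery lasts without any solar input when the device sleeps most of the time and wakes briefly to run inference. The 500 mW active figure is the upper bound quoted in this article; the 1 mW sleep draw, 2% duty cycle, 3.7 V nominal cell voltage, and 80% usable capacity are assumptions chosen for illustration.

```python
def average_power_mw(active_mw, sleep_mw, duty_cycle):
    """Time-weighted average power for a device that sleeps between wake-ups."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return active_mw * duty_cycle + sleep_mw * (1.0 - duty_cycle)


def battery_days(capacity_mah, voltage_v, avg_power_mw, usable_fraction=0.8):
    """Days of runtime from a full battery with no solar charging."""
    energy_mwh = capacity_mah * voltage_v * usable_fraction
    return energy_mwh / avg_power_mw / 24.0


if __name__ == "__main__":
    avg = average_power_mw(active_mw=500, sleep_mw=1, duty_cycle=0.02)
    days = battery_days(capacity_mah=3000, voltage_v=3.7, avg_power_mw=avg)
    print(f"average draw: {avg:.2f} mW, runtime without sun: {days:.1f} days")
```

Under these assumptions a 3000 mAh battery covers roughly a month of darkness, so the solar panel mainly needs to replace a small daily deficit. Doubling the duty cycle roughly halves that margin, which is why trigger sensitivity and battery life are tuned together.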

Software Stack and Model Optimization

The standardized software architecture for TinyML wildlife monitoring includes:

Operating System Layer

- FreeRTOS or Zephyr for real-time task management

- Power management drivers for sleep/wake cycles

- File system support (FAT32, exFAT) for SD cards

Machine Learning Framework

- TensorFlow Lite Micro for neural network inference

- Edge Impulse SDK for sensor data processing

- Quantized models (INT8) for memory efficiency

Application Layer

- Species classification engine

- Data storage manager with compression

- Alert system for threat detection

- Diagnostic logging and error recovery

Model optimization techniques are essential for achieving acceptable performance on resource-constrained devices:

- Quantization: Convert 32-bit floating-point weights to 8-bit integers (4x size reduction)

- Pruning: Remove unnecessary neural network connections (30-50% parameter reduction)

- Knowledge Distillation: Train smaller "student" models to mimic larger "teacher" models

- Architecture Search: Automatically find optimal network structures for target hardware

These optimizations typically achieve inference times of 100-300ms per classification with power consumption under 500mW during active processing.
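
To make the quantization step concrete, here is a minimal pure-Python sketch of affine INT8 quantization: each 32-bit float weight is mapped to an 8-bit integer using a scale and zero point, which is where the 4x size reduction comes from. Production toolchains such as TensorFlow Lite perform this per tensor or per channel with calibration data; the function names and sample weights below are illustrative assumptions.

```python
def quantize_int8(weights):
    """Affine quantization of a list of floats to the int8 range [-128, 127]."""
    w_min = min(min(weights), 0.0)  # the representable range must include zero
    w_max = max(max(weights), 0.0)
    scale = (w_max - w_min) / 255
    if scale == 0:
        scale = 1.0                 # all-zero input: any scale works
    zero_point = round(-128 - w_min / scale)
    quantized = [
        max(-128, min(127, round(w / scale) + zero_point)) for w in weights
    ]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from int8 codes."""
    return [(q - zero_point) * scale for q in quantized]


if __name__ == "__main__":
    weights = [-0.82, -0.1, 0.0, 0.37, 1.2]
    q, scale, zp = quantize_int8(weights)
    restored = dequantize(q, scale, zp)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print(q, f"scale={scale:.5f}", f"zero_point={zp}", f"max error={worst:.5f}")
```

The reconstruction error per weight stays within about one quantization step, which helps explain why well-calibrated INT8 models often lose only a little accuracy.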

Field Maintenance and Data Retrieval Protocols

Even the most sophisticated systems require periodic maintenance. The 2026 protocols recommend quarterly field visits for:

Physical Inspection 🔍

- Check weatherproof seals and housing integrity

- Clean camera lenses and solar panels

- Verify mounting stability and adjust positioning

- Replace desiccant packets in humid environments

System Diagnostics

- Review error logs for hardware failures

- Verify battery health and charging performance

- Test sensor functionality and calibration

- Update firmware if improvements are available

Data Management

- Swap SD cards for laboratory analysis

- Backup critical data to redundant storage

- Upload high-priority detections via satellite link

- Reset storage counters and compression settings

For teams conducting long-term biodiversity monitoring, establishing consistent maintenance schedules ensures data continuity and maximizes device lifespan, which typically reaches 3-5 years or more with proper care.

Integration with Biodiversity Assessment Frameworks

Compatibility with Regulatory Requirements

As conservation regulations evolve globally, TinyML monitoring systems must provide data that meets legal and scientific standards. The 2026 protocols ensure compatibility with major frameworks:

UK Biodiversity Net Gain Requirements

For developers working within Biodiversity Net Gain frameworks, TinyML devices can provide:

- Baseline species inventories before development

- Post-development monitoring to verify habitat enhancement

- Long-term tracking to demonstrate 30-year gain maintenance

- Standardized data formats for statutory reporting

IUCN Red List Assessments

TinyML data contributes to species conservation status evaluations through:

- Population density estimates from detection frequencies

- Range mapping based on geographic distribution of observations

- Trend analysis from multi-year monitoring datasets

- Threat documentation including habitat loss and poaching pressure

Convention on Biological Diversity Targets

National biodiversity monitoring programs utilize TinyML systems to track progress toward international commitments, providing cost-effective data at scales previously impossible.

Data Quality and Scientific Validation

For monitoring data to be scientifically credible, it must meet rigorous quality standards. The 2026 protocols include validation frameworks that ensure TinyML-generated data is comparable to traditional survey methods:

Accuracy Benchmarking

- Cross-validation against expert species identification

- Comparison with concurrent manual surveys

- False positive/negative rate documentation

- Confidence threshold calibration
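
The benchmarking items above reduce to comparing device output with expert labels for the same events. The sketch below computes overall agreement plus false positive and false negative rates from two lists of presence/absence calls. The function name and the sample data are made up for illustration.

```python
def benchmark(predicted, expert):
    """Compare model presence/absence calls against expert labels.

    Both arguments are equal-length lists of booleans (species present?).
    """
    if len(predicted) != len(expert):
        raise ValueError("inputs must have the same length")
    tp = sum(p and e for p, e in zip(predicted, expert))
    tn = sum((not p) and (not e) for p, e in zip(predicted, expert))
    fp = sum(p and (not e) for p, e in zip(predicted, expert))
    fn = sum((not p) and e for p, e in zip(predicted, expert))

    def rate(numerator, denominator):
        return numerator / denominator if denominator else 0.0

    return {
        "agreement": rate(tp + tn, len(expert)),
        "false_positive_rate": rate(fp, fp + tn),
        "false_negative_rate": rate(fn, fn + tp),
    }


if __name__ == "__main__":
    model = [True, True, False, False, True, False, True, False]
    human = [True, False, False, False, True, True, True, False]
    print(benchmark(model, human))
```

Running the same comparison at several confidence thresholds is one straightforward way to calibrate the threshold: raising it usually trades false positives for false negatives.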

Metadata Standards

- GPS coordinates (±10m accuracy)

- Timestamp synchronization (UTC)

- Environmental conditions (temperature, humidity, light levels)

- Device configuration and model version tracking

Reproducibility Requirements

- Open documentation of AI models and training data

- Version control for hardware and software configurations

- Standardized data formats (Darwin Core, GBIF standards)

- Audit trails for data processing and filtering decisions
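
As an illustration of what a standardized record might look like, the sketch below maps one detection onto Darwin Core terms. The term names (`occurrenceID`, `basisOfRecord`, `eventDate`, and so on) come from the Darwin Core standard; the function name, the ID scheme, and the choice to store model version and confidence in `identificationRemarks` are assumptions made for this example.

```python
import json
from datetime import datetime, timezone


def to_darwin_core(scientific_name, lat, lon, when, device_id,
                   model_version, confidence):
    """Build a Darwin Core style occurrence record for one detection."""
    if when.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    when_utc = when.astimezone(timezone.utc)
    return {
        "occurrenceID": f"{device_id}:{when_utc.strftime('%Y%m%dT%H%M%SZ')}",
        "basisOfRecord": "MachineObservation",
        "scientificName": scientific_name,
        "eventDate": when_utc.strftime("%Y-%m-%dT%H:%M:%SZ"),
        "decimalLatitude": round(lat, 5),
        "decimalLongitude": round(lon, 5),
        "geodeticDatum": "WGS84",
        "coordinateUncertaintyInMeters": 10,  # matches the ±10 m target above
        "identificationRemarks": (
            f"model={model_version}; confidence={confidence:.2f}"
        ),
    }


if __name__ == "__main__":
    record = to_darwin_core(
        "Panthera onca", -3.4653, -62.2159,
        datetime(2026, 3, 14, 5, 30, tzinfo=timezone.utc),
        device_id="cam_a", model_version="v1.2.0", confidence=0.93,
    )
    print(json.dumps(record, indent=2))
```

Recording the model version alongside each identification supports the audit-trail requirement: a record can later be re-evaluated if a model is found to misclassify a species.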

Studies comparing TinyML monitoring to traditional methods have shown 95-98% agreement in species presence/absence and 85-92% agreement in abundance estimates, validating the technology for scientific applications.

Future Developments and Emerging Capabilities

Edge AI Advancements on the Horizon

The field of TinyML continues to evolve rapidly. Emerging capabilities expected by 2027-2028 include:

Enhanced Processing Power

- Next-generation microcontrollers with dedicated neural processing units

- 5-10x performance improvements while maintaining low power consumption

- Support for larger, more accurate AI models

- Real-time video analysis (currently limited to still images)

Advanced Sensor Fusion

- Integration of thermal imaging for nocturnal species

- Environmental DNA (eDNA) sensors for aquatic monitoring

- Acoustic arrays for precise sound source localization

- Multi-spectral imaging for vegetation health assessment

Autonomous Adaptation

- Self-tuning algorithms that optimize for local conditions

- Federated learning across device networks

- Automatic model updates based on detection patterns

- Predictive maintenance using system health indicators

Scaling from Pilot Projects to Continental Networks

As TinyML technology matures, conservation organizations are transitioning from small pilot deployments to continental-scale monitoring networks. These mega-projects aim to:

- Deploy 10,000+ devices across entire ecosystems

- Create real-time biodiversity dashboards for policymakers

- Enable early warning systems for ecosystem collapse

- Provide public access to wildlife observations through citizen science platforms

The African BioWatch Initiative, launched in 2025, exemplifies this scale-up approach with 5,000 TinyML devices monitoring wildlife corridors across 15 countries. Similar programs are emerging in the Amazon Basin, Southeast Asian forests, and Arctic regions.

For organizations involved in biodiversity planning and assessment, these networks provide unprecedented baseline data and enable adaptive management strategies based on real-time ecosystem changes.

Conclusion

TinyML for offline wildlife monitoring represents a fundamental shift in conservation technology: from expensive, internet-dependent systems accessible only to well-funded organizations to affordable, autonomous devices that empower conservation teams worldwide. By embedding artificial intelligence directly into miniature sensors that operate for months without connectivity, these systems democratize sophisticated ecological monitoring.

The standardized protocols introduced in 2026 provide practical frameworks for deployment, data management, and threat detection in grid-independent areas. With complete systems now available for under $50, and open-source designs enabling local manufacturing, cost is far less of a barrier to implementing advanced monitoring programs. The integration of mesh networking for anti-poaching alerts and multilingual model repositories addresses both technical and equity challenges that have historically limited conservation technology adoption.

Actionable Next Steps

For conservation organizations ready to implement TinyML monitoring:

- Assess your monitoring needs: Identify target species, survey areas, and specific threats requiring detection

- Start with pilot deployments: Test 5-10 devices in representative habitats before scaling up

- Engage with open-source communities: Access pre-trained models and contribute regional species data

- Invest in local capacity building: Train field teams in deployment, maintenance, and data analysis

- Establish data management protocols: Develop workflows for storage, analysis, and integration with existing databases

- Connect with regional networks: Join collaborative initiatives to share models, techniques, and lessons learned

The technology is mature, the protocols are established, and the global community is ready to support implementation. Whether monitoring biodiversity for development projects or protecting endangered species in remote wilderness, TinyML provides the tools to gather critical data that informs conservation decisions and protects Earth's biological heritage. 🌱

The future of wildlife monitoring is offline, autonomous, and accessible to all—making 2026 a pivotal year in the democratization of conservation technology.